The AI Supply Chain Thesis: Why This Is an Industrial Revolution, Not Just a Software Cycle

Why AI is an industrial revolution — not just a software cycle — and what it means for investors tracking the physical infrastructure buildout from chips to power to trillion-dollar clusters.

The dominant narrative around artificial intelligence focuses on software: foundation models, chatbots, AI agents, and the race between OpenAI, Anthropic, Google, and Meta. But beneath every breakthrough in AI capability lies a massive, underappreciated physical reality — one that creates what may be the most compelling investment theme of the next decade.

AI is not just a software revolution. It is an industrial revolution. And like every industrial revolution before it, the greatest and most durable wealth will be created by those who build and supply the physical infrastructure.

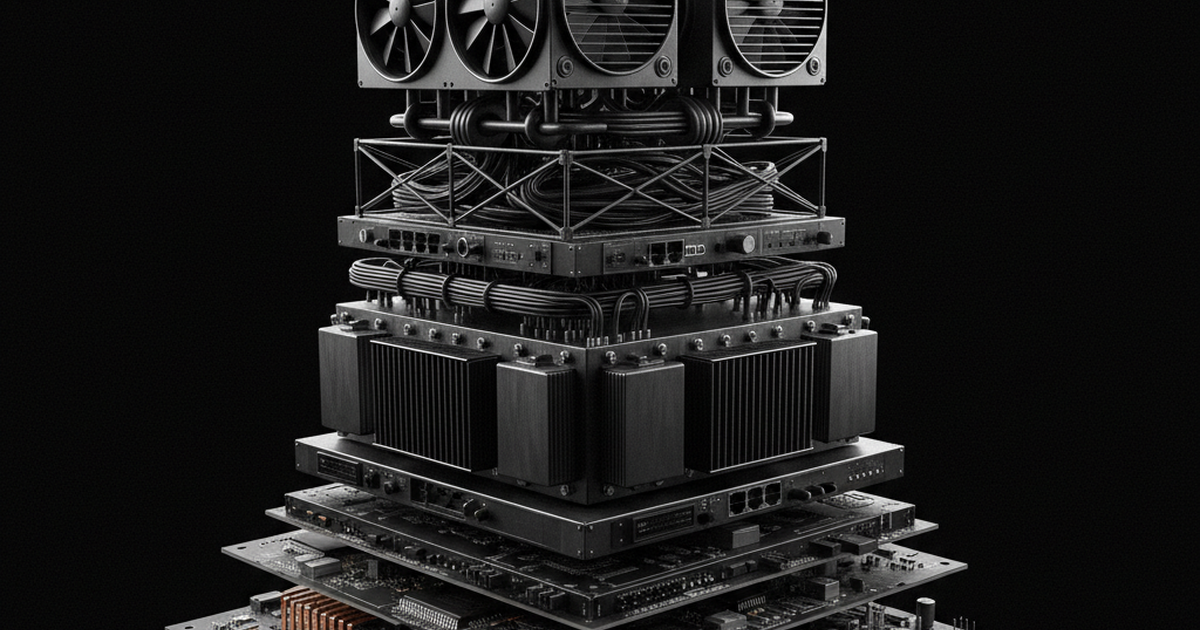

The Physical Reality of Intelligence

Training GPT-4 required an estimated 25,000 NVIDIA A100 GPUs running for roughly 100 days, drawing on the order of 10 megawatts for the GPUs alone, around the clock. Next-generation frontier models from OpenAI, Anthropic, and Google are expected to require 10x to 100x more compute.
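To make that scale concrete, here is a back-of-envelope sketch of the GPU power draw and energy for such a run. The GPU count and duration are the estimates quoted above. The 400 W per GPU is an assumption: it is the board power (TDP) of the A100 SXM variant, and actual draw varies with load. Host CPUs, networking, storage, and cooling are deliberately left out, so total facility consumption would be higher.

```python
# Back-of-envelope GPU energy estimate for a GPT-4-scale training run.
# GPU count and duration are the article's estimates; WATTS_PER_GPU is an
# assumed value (A100 SXM TDP). Non-GPU load and cooling are excluded.

GPU_COUNT = 25_000      # estimated A100s (from the text)
TRAINING_DAYS = 100     # estimated run length (from the text)
WATTS_PER_GPU = 400     # assumption: every GPU runs at A100 SXM TDP


def gpu_power_mw(gpu_count: int, watts_per_gpu: float) -> float:
    """Aggregate GPU power draw in megawatts."""
    return gpu_count * watts_per_gpu / 1_000_000


def energy_gwh(power_mw: float, days: float) -> float:
    """Energy in gigawatt-hours for a constant draw over `days` days."""
    return power_mw * days * 24 / 1_000


power = gpu_power_mw(GPU_COUNT, WATTS_PER_GPU)
energy = energy_gwh(power, TRAINING_DAYS)
print(f"GPU power draw: {power:.1f} MW")                          # 10.0 MW
print(f"GPU energy over {TRAINING_DAYS} days: {energy:.1f} GWh")  # 24.0 GWh
```

Even under these simplifying assumptions, the GPUs alone account for roughly 24 GWh over the run.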

Leopold Aschenbrenner’s Situational Awareness framework lays this out with startling clarity: by 2028, we should expect to see individual training clusters worth $100 billion or more. By 2030, trillion-dollar clusters become plausible. This isn’t science fiction — it’s the logical extrapolation of current scaling laws and the capital commitments already announced by Microsoft, Google, Amazon, and Meta.

These clusters don’t run on software alone. They require:

- Semiconductors: Hundreds of thousands of cutting-edge GPUs (NVIDIA Blackwell, AMD MI400) fabricated on TSMC's most advanced process nodes, using EUV lithography machines from a single supplier, ASML, which has shipped only a few hundred of them in total.

- Power: A single large AI data center can consume 100–500 megawatts. Some forecasts put AI data center power demand above 50 gigawatts by 2028, comparable to adding the entire electrical load of a medium-sized country.

- Networking: Training runs are distributed across thousands of GPUs that must exchange data at terabit-scale speeds. This demands next-generation optical interconnects, high-radix switches from companies like Arista, and custom networking silicon from companies like Broadcom.

- Cooling and physical infrastructure: Liquid cooling is becoming mandatory for the densest racks. Rack power densities have jumped from 10kW to 100kW+, requiring entirely new thermal and power architectures from companies like Vertiv and new rack-scale hardware from manufacturers like Celestica.
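The ranges in the list above can be combined into a quick scale check: how many large campuses 50 GW of demand implies, and how many 100 kW racks one campus can power. This is a sketch under stated assumptions. The 100–500 MW, 50 GW, and 100 kW figures come from the text; PUE (power usage effectiveness, total facility power divided by IT power) is a hypothetical parameter here, and 1.3 is an illustrative value, not a sourced figure.

```python
import math

# Scale check using the figures quoted in the text. PUE is a hypothetical
# parameter: the share of facility power lost to cooling and overhead.


def campuses_needed(total_gw: float, campus_mw: float) -> float:
    """Number of campuses of a given size needed to absorb the total demand."""
    return total_gw * 1_000 / campus_mw


def racks_supported(campus_mw: float, rack_kw: float, pue: float = 1.0) -> int:
    """Whole racks a campus can power after setting aside overhead via PUE."""
    it_power_kw = campus_mw * 1_000 / pue
    return math.floor(it_power_kw / rack_kw)


for campus_mw in (100, 500):
    print(
        f"{campus_mw} MW campus: "
        f"{campuses_needed(50, campus_mw):.0f} campuses for 50 GW, "
        f"{racks_supported(campus_mw, rack_kw=100, pue=1.3)} racks at 100 kW (PUE 1.3)"
    )
```

Under these assumptions, 50 GW corresponds to roughly 100 to 500 large campuses, each housing on the order of 770 to 3,850 high-density racks.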

This is not a software supply chain. This is an industrial supply chain, with long lead times, capacity constraints, and bottlenecks that create pricing power and durable competitive advantages.

The Trillion-Dollar Buildout

Consider the capital expenditure numbers. In 2024, the major hyperscalers (Microsoft, Google, Amazon, Meta) collectively spent over $200 billion on capital expenditures, with AI infrastructure as the primary driver. Goldman Sachs projects cumulative AI-related capex could exceed $1 trillion by 2028.

To put that in perspective: the entire U.S. shale oil revolution — which reshaped global energy markets over a decade — involved roughly $1 trillion in cumulative investment. The AI infrastructure buildout is approaching the same scale but on a compressed timeline.

This creates cascading demand through the supply chain. When a hyperscaler like Microsoft orders, say, 500,000 NVIDIA GPUs, that order flows through:

- NVIDIA designs the chips and captures enormous margin

- TSMC fabricates them using the world’s most advanced process technology

- ASML supplies the lithography equipment that makes fabrication possible

- SK Hynix and Samsung provide the HBM (High Bandwidth Memory) stacked on each GPU

- Broadcom and Arista supply the networking to connect them

- Vertiv and Eaton provide power distribution and cooling

- Vistra, Constellation, and Cameco supply the electricity and nuclear fuel

Every layer of this stack represents an investment opportunity — and critically, many of these companies have structural advantages that are extremely difficult to replicate.

The Goldman 4-Phase Framework

Goldman Sachs has articulated a useful four-phase framework for thinking about AI investment. In simplified form:

- Phase 1 — Chips: The earliest and most obvious beneficiaries. NVIDIA has been the poster child, but TSMC, ASML, and Broadcom also sit here. This phase is well underway.

- Phase 2 — Infrastructure: Companies building the physical systems — data centers, power, cooling, networking. Vertiv, Eaton, Celestica, Arista. This phase is accelerating rapidly.

- Phase 3 — Platforms: Cloud providers and companies building AI-powered platforms that monetize the infrastructure. AWS, Azure, Oracle, CoreWeave, Palantir, ServiceNow.

- Phase 4 — Applications: Broad productivity gains across the economy. This phase is still largely ahead of us.

As of early 2026, we are firmly in the Phase 1–2 transition, with Phase 3 beginning to emerge. The infrastructure buildout is nowhere near complete. In fact, by most measures, we are still in the early innings.

Why This Is a Decade-Long Theme

Several structural factors suggest the AI supply chain investment theme has extraordinary duration:

Scaling laws continue to hold. Model performance has kept improving predictably as training compute grows, with no clear sign yet that the trend is breaking. Larger models continue to exhibit emergent capabilities, and the industry's largest players are betting tens of billions that this trend persists.

Supply constraints create duration. Building semiconductor fabs takes 3–5 years. Permitting and constructing power plants takes 5–10 years. Nuclear power — increasingly viewed as essential for AI — operates on even longer timelines. These physical constraints mean the buildout cannot be rushed, extending the investment cycle.

The competitive dynamic is self-reinforcing. No major technology company can afford to fall behind in AI capability. This creates a capex arms race where each player must continue investing regardless of near-term returns. Microsoft has publicly stated it will spend whatever is necessary to maintain its AI infrastructure lead.

Geopolitics adds another dimension. The CHIPS Act, export controls on advanced semiconductors to China, and the reshoring of fabrication capacity create policy tailwinds for U.S.-based supply chain companies. Taiwan's geopolitical risk, given its concentration of leading-edge fabrication, strengthens the case for supply chain diversification.

How ChipStack Approaches This

At ChipStack, we built our platform specifically to help investors navigate this complexity. We track 50+ companies across every layer of the AI supply chain, score them on supply chain positioning, revenue momentum, competitive moats, and risk factors, and deliver actionable intelligence weekly.

The AI supply chain thesis is not about picking one stock. It’s about understanding a system — mapping dependencies, identifying bottlenecks, and positioning across the full stack as the buildout unfolds over the next decade.

The physical infrastructure behind artificial intelligence represents one of the largest capital deployment cycles in history. The investors who understand the supply chain — not just the software — will be best positioned to capture it.

This is a free preview of ChipStack research. Subscribe to Pro for weekly supply chain briefs, company scorecards, and real-time thesis-breaking alerts.